Unicode Normalization in Ruby

I recently published an article in which I tested most of Ruby's string methods with certain Unicode characters to see if they would behave unexpectedly. Many of them did.

One criticism that a few people had of the article was that I was using unnormalized strings for testing. Frankly, I was a bit fuzzy on Unicode normalization. I suspect that many Rubyists are.

Using normalization, you can take many Unicode strings that behaved unexpectedly in my tests and convert them into strings that play well with Ruby's string methods. However:

- The conversion isn't always perfect. Some Unicode sequences will always cause Ruby's string methods to misbehave.

- It's something you have to do manually. Neither Ruby, Rails, nor your database normalizes strings automatically by default.

This article will be a brief introduction to Unicode normalization in Ruby. Hopefully it will give you a jumping-off point for your own explorations.

Let's normalize a string

The String#unicode_normalize method was introduced in Ruby 2.2. Because it's written in Ruby, it's not as fast as normalization libraries such as the utf8_proc and unicode gems, which leverage C.

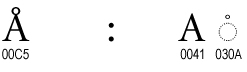
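Ruby 2.2 didn't just add String#unicode_normalize. It also added a bang variant that modifies the string in place and a predicate that tells you whether a string is already normalized. Here's a minimal sketch of all three, using only the standard library:

```ruby
# String#unicode_normalize, #unicode_normalize! and #unicode_normalized?
# ship with Ruby 2.2+; no gem is required. All three default to NFC.
decomposed = "A\u030A"  # "A" followed by COMBINING RING ABOVE

puts decomposed.unicode_normalized?       # false: this string is not in NFC
composed = decomposed.unicode_normalize   # returns a new, NFC-normalized string
puts composed.unicode_normalized?         # true

# The bang version replaces the receiver's contents in place.
s = "A\u030A".dup
s.unicode_normalize!
puts s == "\u00C5"                        # true
```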

The reason we need normalization is that Unicode often offers more than one way to encode the same character. The letter "Å" can be represented as the single code point "\u00c5" or as the letter "A" followed by a combining ring above: "A\u030A".

Normalization converts one form to another:

"A\u030A".unicode_normalize #=> 'Å' (same as "\u00C5")
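To see why this matters, compare the two encodings of "Å" directly. They look identical on screen, but Ruby compares strings code point by code point, so they're only equal once both have been normalized to the same form:

```ruby
precomposed = "\u00C5"   # LATIN CAPITAL LETTER A WITH RING ABOVE
decomposed  = "A\u030A"  # "A" followed by COMBINING RING ABOVE

# Same glyph, different code points, so == returns false.
p precomposed == decomposed   # => false
p precomposed.codepoints      # => [197]
p decomposed.codepoints       # => [65, 778]

# After normalizing both to NFC (the default), they compare equal.
p precomposed.unicode_normalize == decomposed.unicode_normalize  # => true
```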

Of course, there isn't just one way to normalize Unicode. That would be too simple! There are four ways to normalize, called "normalization forms." They're named using cryptic acronyms: NFD, NFC, NFKD and NFKC.

String#unicode_normalize uses NFC by default, but we can tell it to use another form like so:

"a\u0300".unicode_normalize(:nfkc) #=> 'à' (same as "\u00E0")
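The form is passed as a symbol: :nfc, :nfd, :nfkc or :nfkd. A quick way to get a feel for them is to run the same input through all four and print the resulting code points. This sketch reuses the "a\u0300" example above:

```ruby
# Normalize one input with each of the four forms and print
# the resulting code points in U+XXXX notation.
input = "a\u0300"  # "a" followed by COMBINING GRAVE ACCENT

%i[nfc nfd nfkc nfkd].each do |form|
  out = input.unicode_normalize(form)
  puts "#{form}: #{out.codepoints.map { |c| format('U+%04X', c) }.join(' ')}"
end
# nfc: U+00E0
# nfd: U+0061 U+0300
# nfkc: U+00E0
# nfkd: U+0061 U+0300
```

For a plain accented letter like this, the compatibility forms give the same result as their canonical counterparts. The differences only show up for characters that have compatibility mappings, which we'll get to next.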

But what does this actually mean? What do the four normalization forms actually do? Let's take a look.

Normalization forms

There are two kinds of normalization operations:

- Composition: Converts multi-code-point characters into single code points. For example, "a\u0300" becomes "\u00E0", both of which are ways of encoding the character à.

- Decomposition: The opposite of composition. Converts single-code-point characters into multiple code points. For example, "\u00E0" becomes "a\u0300".
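Here's a small sketch of that round trip in Ruby. Decomposing "à" with NFD and composing it again with NFC gets you back where you started. Along the way, notice that String#length changes, because it counts code points rather than what a reader would call characters:

```ruby
composed   = "\u00E0"                          # "à" as a single code point
decomposed = composed.unicode_normalize(:nfd)  # decomposition

p decomposed.codepoints                          # => [97, 768]
p decomposed.unicode_normalize(:nfc).codepoints  # => [224], composed again

# Same character, different lengths: String#length counts code points.
p composed.length    # => 1
p decomposed.length  # => 2
```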

Composition and decomposition can each be done in two ways:

- Canonical: Preserves glyphs. For example, "2⁵" remains "2⁵", even though some systems may not support the superscript-five character.

- Compatibility: Can replace characters with their compatibility equivalents, which may change how they look. For example, "2⁵" will be converted to "25".
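You can check the "2⁵" example yourself. This sketch compares strings instead of printing them, so the result doesn't depend on how your terminal displays superscripts:

```ruby
power = "2\u2075"  # "2⁵": DIGIT TWO + SUPERSCRIPT FIVE

# Canonical forms leave the superscript alone...
p power.unicode_normalize(:nfc) == power   # => true
p power.unicode_normalize(:nfd) == power   # => true

# ...while compatibility forms replace it with a plain digit five.
p power.unicode_normalize(:nfkc) == "25"   # => true
p power.unicode_normalize(:nfkd) == "25"   # => true
```

That's also a reminder that compatibility normalization is lossy: once "2⁵" has become "25", there's no way to know it was ever an exponent.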

The two operations and two options are combined in various ways to create four "normalization forms." I've listed them all in the table below, along with descriptions and examples of input and output:

| Name | Description | Input | Output |
|---|---|---|---|
| NFD | Canonical Decomposition | Å "\u00c5" | Å "A\u030A" |
| NFC | Canonical Decomposition Followed by Canonical Composition | Å "A\u030A" | Å "\u00c5" |
| NFKD | Compatibility Decomposition | ẛ̣ "\u1e9b\u0323" | ṩ "\u0073\u0323\u0307" |
| NFKC | Compatibility Decomposition Followed by Canonical Composition | ẛ̣ "\u1e9b\u0323" | ṩ "\u1e69" |
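If you'd rather not take the table's word for it, each row can be checked against String#unicode_normalize. Here's a minimal sketch that encodes the table as data and compares Ruby's output to the expected column:

```ruby
# Each row: [form, input, expected output], copied from the table.
rows = [
  [:nfd,  "\u00C5",       "A\u030A"],
  [:nfc,  "A\u030A",      "\u00C5"],
  [:nfkd, "\u1E9B\u0323", "\u0073\u0323\u0307"],
  [:nfkc, "\u1E9B\u0323", "\u1E69"],
]

rows.each do |form, input, expected|
  actual = input.unicode_normalize(form)
  puts "#{form.to_s.upcase}: #{actual == expected ? 'matches' : 'differs'}"
end
# NFD: matches
# NFC: matches
# NFKD: matches
# NFKC: matches
```

Note that the NFKD output puts U+0323 (dot below) before U+0307 (dot above), even though the input had them in the opposite order. Normalization also reorders combining marks into a canonical order.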

If you look at this table for a few minutes, you might start to notice that the acronyms kind of make sense:

- "NF" stands for "normalization form."

- "D" stands for "decomposition"

- "C" stands for "composition"

- "K" stands for "kompatibility" :)

For more examples and a much more thorough technical explanation, check out the Unicode Standard Annex #15.

Choosing a normalization form

The normalization form you should use depends on the task at hand. My recommendations below are based on the Unicode Normalization FAQ.

Use NFC for String compatibility

If your goal is to make Ruby's string methods play nicely with most of Unicode, you most likely want to use NFC. There's a reason it's the default for String#unicode_normalize.

- It composes multi-code-point characters into single code points where possible. Multi-code-point characters are the source of most problems with String methods.

- It doesn't alter glyphs, so your end-users won't notice any change in text that they've input.
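Here's a small sketch of the kind of problem NFC fixes. The word "café" typed with a combining accent has five code points, so String#length is off by one and String#reverse separates the accent from its letter. After NFC, both behave as you'd expect:

```ruby
word = "cafe\u0301"  # "café" with "e" + COMBINING ACUTE ACCENT

# Without normalization, length and reverse operate on code points.
p word.length                    # => 5
p word.reverse.codepoints.first  # => 769, the accent now leads the string

nfc = word.unicode_normalize     # NFC composes "e\u0301" into "\u00E9"
p nfc.length                     # => 4
p nfc.reverse == "\u00E9fac"     # => true
```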

That said, not all multi-code-point characters can be composed into a single code point. In those cases, Ruby's String methods will still misbehave:

s = "\u01B5\u0327\u0308" # => "Ƶ̧̈", an un-composable character

s.unicode_normalize(:nfc).size # => 3, even though there's only one character
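When normalization can't help, one workaround is to count grapheme clusters (what a reader perceives as characters) instead of code points. A minimal sketch using the `\X` regex escape from Ruby's Onigmo engine, which matches one extended grapheme cluster. On Ruby 2.5 and later, String#grapheme_clusters does the same job:

```ruby
s = "\u01B5\u0327\u0308"  # Z with stroke + combining cedilla + combining diaeresis

nfc = s.unicode_normalize
p nfc.length             # => 3 code points, even after NFC
p nfc.scan(/\X/).length  # => 1 grapheme cluster
```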

Use NFKC for security and DB compatibility

If you're working with security-related text such as usernames, or primarily interested in having text play nicely with your database, then NFKC is probably a good choice.

- It converts potentially problematic characters into their compatibility equivalents.

- It then composes characters into single code points where possible.
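To get a sense of what "compatibility equivalents" covers in practice, here's a sketch with a few common cases: fullwidth letters, typographic ligatures and superscripts. All of them collapse to plain ASCII under NFKC:

```ruby
# Input => the plain string we expect after NFKC.
samples = {
  "\uFF32\uFF55\uFF42\uFF59" => "Ruby",  # fullwidth "Ｒｕｂｙ"
  "\uFB01le"                 => "file",  # "ﬁ" ligature followed by "le"
  "x\u00B2"                  => "x2",    # "x²" with SUPERSCRIPT TWO
}

samples.each do |input, expected|
  out = input.unicode_normalize(:nfkc)
  puts "#{input.codepoints.length} code points -> #{out} (#{out == expected})"
end
# 4 code points -> Ruby (true)
# 3 code points -> file (true)
# 2 code points -> x2 (true)
```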

To see why this is useful for security, imagine that you have a user with username "HenryIV". A malicious actor might try to impersonate this user by registering a new username: "HenryⅣ".

I know, they look the same. That's the point. But they're actually two different strings: the former uses the ASCII characters "IV", while the latter uses the Unicode character for the Roman numeral four, "Ⅳ".

You can prevent this sort of thing by normalizing usernames with NFKC before validating uniqueness. In this case, NFKC converts the Unicode character "\u2163" to the ASCII letters "IV".

a = "Henry\u2163"

b = "HenryIV"

a.unicode_normalize(:nfc) == b.unicode_normalize(:nfc) # => false, because NFC preserves glyphs

a.unicode_normalize(:nfkc) == b.unicode_normalize(:nfkc) # => true, because NFKC converts both to the ASCII "IV"
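In a real app you'd compare normalized keys whenever a user registers. Here's a minimal sketch of the idea in plain Ruby. `username_key` and `UsernameRegistry` are hypothetical names I made up for illustration, and the Set stands in for whatever uniqueness check your app actually uses (a database unique index, for example):

```ruby
require "set"

# Hypothetical helper: the key used to compare usernames.
# NFKC folds compatibility characters such as "Ⅳ" into "IV".
def username_key(name)
  name.unicode_normalize(:nfkc)
end

# Hypothetical in-memory registry standing in for a uniqueness validation.
class UsernameRegistry
  def initialize
    @keys = Set.new
  end

  # Returns true if the name was registered, false if its NFKC key is taken.
  def register(name)
    @keys.add?(username_key(name)) ? true : false
  end
end

registry = UsernameRegistry.new
p registry.register("HenryIV")      # => true
p registry.register("Henry\u2163")  # => false, NFKC maps it to "HenryIV"
```

Keep in mind that NFKC only handles compatibility characters. It doesn't fold case, and it won't catch look-alikes from other scripts, such as the Cyrillic "А" (U+0410) standing in for the Latin "A".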

Parting Words

Now that I've looked into it more, I'm a little surprised that Unicode normalization isn't a bigger topic in the Ruby and Rails communities. You might expect it to be done for you by Rails, but as far as I can tell it's not. And not normalizing the data that your users give you means that many of Ruby's string methods are not reliable.

If any of you dear readers know something I don't, please get in touch via Twitter at @StarrHorne or by email at starr@honeybadger.io. Unicode is a big topic and I've already proven I don't know everything about it. :)

Written by

Starr Horne

Starr Horne is a Rubyist and former Chief JavaScripter at Honeybadger. When she's not fixing bugs, she enjoys making furniture with traditional hand tools, reading history, and brewing beer in her garage in Seattle.