We strive for good test coverage in our Ruby apps, but that can be hard to achieve, especially when your code makes use of external APIs.

External APIs present several problems when testing. They're provided by other people, so they could change or disappear without notice. They're subject to rate limiting, outages, and connectivity issues, and they're often slow.

In this article, we'll discuss several possible ways to test code that consumes third-party APIs. We'll start with a naive approach and show how its failings lead to more sophisticated measures like manual mocking, automated mocking, and contracts.

Calling the external API in the tests

Let's start with a straightforward, naive approach. We simply use the external API in the test.

For this article, we'll use RSpec for our tests, but the same principles can be applied to other testing frameworks in Ruby.

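```ruby
require "faraday"
require "json"

class ExampleApiClient
  # Fetches the employee list from the sample API and parses the JSON body
  # into a Hash with symbol keys.
  def self.employees
    url = "http://dummy.restapiexample.com/api/v1/employees"
    data = Faraday.get(url).body
    JSON.parse(data, symbolize_names: true)
  end
end
```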

This API returns JSON in the following format:

```json
{
  "status": "success",
  "data": [
    {
      "id": "1",
      "employee_name": "Tiger Nixon",
      "employee_salary": "320800",
      "employee_age": "61",
      "profile_image": ""
    },
    {
      "id": "2",
      "employee_name": "Garrett Winters",
      "employee_salary": "170750",
      "employee_age": "63",
      "profile_image": ""
    },
    ....
  ]
}
```

Now, let's test this code with a simple case:

```ruby
describe ExampleApiClient do
  describe 'employees' do
    let(:employees_response) { ExampleApiClient.employees }

    it "returns correctly some data" do
      expect(employees_response).to be_kind_of(Hash)
      expect(employees_response).to have_key(:status)
      expect(employees_response).to have_key(:data)
    end
  end
end
```

But, as you can see, we're calling the real API in our test.

Consequences of calling the real API in our tests

In this approach, we use the real response from the API, so if the API changes, our test fails. That's good, but this approach has its downsides too:

- The test is slow, and if we have others like this one, the whole test suite becomes slower.

- Since every test is slow, we'll be less keen to write more tests, so we'll have less coverage in our code.

- The test might fail if there's a connectivity issue on our side.

- The API could be rate-limited, and our tests could fail for that reason. This is more common in development environments, like the one we'd use in our tests.

- Finally, if we send requests to the real API, we might change things we didn't intend to, like creating unnecessary resources or deleting existing ones.

I think there's a place for calling real APIs from our tests, as we'll discuss later, but for unit testing, it's not a good approach. For now, if we shouldn't call the API from our tests, what should we do?

Alternatives to calling the real API in a test

As you can imagine, we're not the first ones to face this problem, and there are different solutions. Let's see some of them.

Manually stubbing the HTTP requests

When our code sends a request to an external API, we stub the HTTP request and return what we'd expect in the real scenario. Let's see a quick example with [WebMock](https://github.com/bblimke/webmock), one of the most widely used libraries for this purpose.

Once we've added it to our project, we need to add this to our spec_helper.rb file:

```ruby
# ....
require 'json'
require 'webmock/rspec'

RSpec.configure do |config|
  config.before(:each) do
    employees_response = {
      status: 'success',
      data: []
    }

    # Any GET to this URL now returns the canned body instead of hitting the network.
    stub_request(:get, "http://dummy.restapiexample.com/api/v1/employees").
      to_return(status: 200, body: employees_response.to_json)
  end

  # ....
end
```

And the test would be the same:

```ruby
require_relative '../example'

describe ExampleApiClient do
  describe 'employees' do
    let(:employees_response) { ExampleApiClient.employees }

    it "returns correctly some data" do
      expect(employees_response).to be_kind_of(Hash)
      expect(employees_response).to have_key(:status)
      expect(employees_response).to have_key(:data)
    end
  end
end
```

The main difference now is that we're testing our code against the predefined response we set in the spec helper, rather than against the real API.

It's important to note that if we had more tests like this in a project, we'd need a better way to organize the stubs, like a dedicated file required in the spec helper.
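The same idea can also be sketched without a stubbing library, which makes the mechanics easier to see. The following is a minimal plain-Ruby sketch, not how WebMock works internally: `StubbedHttp` and `InjectableApiClient` are hypothetical names, and it assumes we're willing to pass the HTTP dependency into the client instead of calling Faraday directly. Canned bodies live in one registry, and, much like WebMock's default of blocking unregistered requests, any URL without a stub raises an error.

```ruby
require "json"

# Hypothetical stub registry: canned response bodies live in one place,
# keyed by URL, instead of being scattered across spec_helper hooks.
class StubbedHttp
  class UnregisteredRequest < StandardError; end

  def initialize
    @bodies = {}
  end

  # Registers the body to return for a given URL; returns self for chaining.
  def stub(url, body)
    @bodies[url] = body
    self
  end

  # Returns the canned body for the URL. Unknown URLs raise, so a test
  # never silently falls through to the real API.
  def get(url)
    @bodies.fetch(url) { raise UnregisteredRequest, "No stub registered for #{url}" }
  end
end

# Hypothetical variant of ExampleApiClient that receives its HTTP dependency.
class InjectableApiClient
  EMPLOYEES_URL = "http://dummy.restapiexample.com/api/v1/employees"

  def initialize(http)
    @http = http
  end

  def employees
    JSON.parse(@http.get(EMPLOYEES_URL), symbolize_names: true)
  end
end

http = StubbedHttp.new.stub(
  InjectableApiClient::EMPLOYEES_URL,
  { status: "success", data: [] }.to_json
)
employees = InjectableApiClient.new(http).employees
# => { status: "success", data: [] }
```

In production, the injected object could be a thin wrapper whose `get(url)` returns `Faraday.get(url).body`. The trade-off is a small change to the client's constructor in exchange for tests that need no HTTP-level stubbing at all.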

What we're really doing here is taking what our test needs from the real API response and hard-coding it. Why don't we automate that? That's our next approach.

Record the requests with VCR to reuse the responses

We can use a library like VCR to record the responses from the API. The first time the tests run, VCR saves the response to every request they make. On later runs, it replays those recorded responses, as long as we don't change the requests.
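To make the record-and-replay idea concrete, here's a toy version in plain Ruby. It only illustrates the concept and is not VCR's API or implementation: `ToyCassette` is a hypothetical class, and it stores just a response body keyed by a request string, while a real cassette records full requests and responses.

```ruby
require "json"
require "tmpdir"

# Hypothetical toy record/replay store (NOT VCR's API).
class ToyCassette
  def initialize(path)
    @path = path
    @interactions = File.exist?(path) ? JSON.parse(File.read(path)) : {}
  end

  # Replays the recorded body for request_key if there is one; otherwise
  # runs the block (the "real" request), records the result, and saves it.
  def fetch(request_key)
    return @interactions[request_key] if @interactions.key?(request_key)

    body = yield
    @interactions[request_key] = body
    File.write(@path, JSON.generate(@interactions))
    body
  end
end

real_calls = 0
real_request = lambda do
  real_calls += 1 # stands in for a slow, real HTTP call
  '{"status":"success","data":[]}'
end

first, second = Dir.mktmpdir do |dir|
  path = File.join(dir, "employees.json")
  key = "GET http://dummy.restapiexample.com/api/v1/employees"
  # First run: nothing recorded yet, so the "real" request is made.
  recorded = ToyCassette.new(path).fetch(key) { real_request.call }
  # Second run (fresh object, same file): the recording is replayed.
  replayed = ToyCassette.new(path).fetch(key) { real_request.call }
  [recorded, replayed]
end
```

After this runs, `real_calls` is 1 and both results are the same body: the second "run" never touched the network, which is exactly the property that makes cassette-backed tests fast.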

We'll go into more detail here because this approach is widespread, and it saves us from manually stubbing the requests for each test. Let's see how we can use it.

Getting started with VCR and RSpec

VCR relies on an HTTP stubbing library under the hood, and WebMock is one of the libraries it supports. First, we add it to our Gemfile along with VCR:

```ruby
gem 'webmock'
gem 'vcr'
```

Now we can create a file called vcr_setup.rb:

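```ruby
require 'vcr'

VCR.configure do |c|
  # Directory where recorded cassettes are stored
  c.cassette_library_dir = 'vcr_cassettes'
  # Use WebMock to intercept HTTP requests
  c.hook_into :webmock
  # Enable the :vcr metadata tag in RSpec examples
  c.configure_rspec_metadata!
end
```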

Then, we need to require that file in our test suite. In this case, since we're using RSpec, we can do this in our spec_helper.rb:

```ruby
require 'vcr_setup'
```

Now we can update our existing test:

```ruby
describe ExampleApiClient do
  describe 'employees' do
    let(:employees_response) { ExampleApiClient.employees }

    it "returns correctly some data", :vcr do
      expect(employees_response).to be_kind_of(Hash)
      expect(employees_response).to have_key(:status)
      expect(employees_response).to have_key(:data)
    end
  end
end
```

Just by adding ", :vcr" to our "it" block, we can use VCR. This works because we added this line to our configuration:

```ruby
c.configure_rspec_metadata!
```

If we're adding VCR to an existing test suite with multiple tests that send requests, it's essential to add ", :vcr" to all of them; otherwise, VCR will raise an error for every real HTTP request made outside a cassette. It might sound a bit extreme, but remember that we're dealing with tests, and these errors are a useful way to notice every place where we call external APIs.

Some tips with VCR

VCR is a mature tool in the Ruby community, so there are plenty of tips that might come in handy. Here are a few.

We can print the path of the file where the responses have been recorded (called a cassette) by adding this to our test:

```ruby
puts VCR.current_cassette.file
```

To keep cassettes from producing big diffs in our pull requests, we can add this to .gitattributes:

```
* text=auto
[PATH TO YOUR CASSETTES]/**/* -diff
```

We can also serialize the recorded requests as JSON instead of the default YAML by adding this to the configuration:

```ruby
VCR.configure do |config|
  config.default_cassette_options = {
    serialize_with: :json
  }
end
```

Finally, we can use ERB in the cassettes, which is handy for including dynamic data like a timestamp or a value passed in from the test.

If we have the following in our test:

```ruby
require 'json'
require 'net/http'

value = [1, 2, 3].to_json

# The erb option makes `value` available as `body` inside the cassette
VCR.use_cassette('example', erb: { body: value }) do
  response = Net::HTTP.get_response('api-example.com', '/resources')
  puts "Response: #{response.body}"
end
```

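We can then use that value in our cassette. (This cassette is in the older VCR 1.x format; newer versions of VCR record an http_interactions list instead, but ERB placeholders are used the same way.)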

```yaml
---
- !ruby/struct:VCR::HTTPInteraction
  request: !ruby/struct:VCR::Request
    method: :get
    uri: http://api-example.com:80/resources
    body:
    headers:
  response: !ruby/struct:VCR::Response
    status: !ruby/struct:VCR::ResponseStatus
      code: 200
      message: OK
    headers:
      content-type:
      - text/html;charset=utf-8
      content-length:
      - "9"
    body: Hello <%= body %>
    http_version: "1.1"
```

Alternatively, we can pass erb: true to the use_cassette call and embed plain Ruby in the YAML, for example, to use a dynamic timestamp:

```erb
<%= Time.now.to_i %>
```
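Under the hood, this is ordinary ERB templating. As a rough illustration using only Ruby's standard library (this is not VCR's own code), here's how the placeholders in the cassette examples get filled in: values passed through the erb option are made available by name in the template, and with erb: true the embedded Ruby is simply evaluated.

```ruby
require "erb"
require "json"

# The same template string used as the cassette body above.
template = "Hello <%= body %>"
value = [1, 2, 3].to_json # => "[1,2,3]"

# result_with_hash (Ruby 2.5+) exposes each hash key as a local variable.
rendered = ERB.new(template).result_with_hash(body: value)
# => "Hello [1,2,3]"

# With no variables, the embedded Ruby is just evaluated when rendered.
timestamp_body = ERB.new("<%= Time.now.to_i %>").result
```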

Disadvantages (and solutions) of stubbing external requests

This level of automation, with stubbing that works almost magically, comes at a price. And even if we stub the requests manually, as we did in the first option, the disadvantages are the same.

Since we only record the responses the first time we run the tests (or copy them manually from a real response), the API could change while our tests keep passing. That's a real problem, but a simple solution is to add end-to-end tests that call the real API to cover those cases. They should focus only on the main execution paths, so the rest of the suite keeps the speed and flexibility of using VCR.

The tests shown here are considered unit tests, and stubbing the external request in them relies on two assumptions:

- The format of the response from the external service won't change. We need to evaluate this case by case, but large APIs such as GitHub's or Stripe's are versioned, so as long as the API is online and you use the same version, you can expect the response to follow the documentation. Of course, if the API is new and might change without notice, we can't assume this.

- The API will be online when we call it. From the perspective of our unit tests, it's safe to assume that, but the end-to-end tests showing that the main cases work in the real world are still handy. You can also set up alerts for when the APIs your project depends on go down.
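One way to notice when the first assumption breaks is to have the end-to-end tests check the shape of the live response rather than exact values. Here's a minimal sketch in plain Ruby, assuming the employees format shown at the beginning of the article; `EMPLOYEE_SHAPE` and `shape_errors` are hypothetical helpers, not part of RSpec or any other library.

```ruby
require "json"

# Hypothetical description of the fields we rely on and their expected types.
# The sample API returns numbers as strings, so they're checked as String.
EMPLOYEE_SHAPE = {
  id: String,
  employee_name: String,
  employee_salary: String,
  employee_age: String
}.freeze

# Returns a list of human-readable problems; an empty list means the shape matches.
def shape_errors(response)
  errors = []
  errors << "status is not 'success'" unless response[:status] == "success"
  data = response[:data]
  return errors << "data is not an array" unless data.is_a?(Array)

  data.each_with_index do |employee, index|
    EMPLOYEE_SHAPE.each do |key, type|
      errors << "data[#{index}].#{key} should be a #{type}" unless employee[key].is_a?(type)
    end
  end
  errors
end

sample = JSON.parse(<<~JSON, symbolize_names: true)
  {"status": "success",
   "data": [{"id": "1", "employee_name": "Tiger Nixon",
             "employee_salary": "320800", "employee_age": "61",
             "profile_image": ""}]}
JSON

# A hypothetical changed response: id became an Integer, salary and age are gone.
changed = { status: "success", data: [{ id: 1, employee_name: "Tiger Nixon" }] }
```

Here `shape_errors(sample)` returns an empty list, while `shape_errors(changed)` reports three problems (the id type, plus the missing salary and age). In a real end-to-end test, you'd pass the result of ExampleApiClient.employees to it and expect an empty list.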

Other approaches: contract testing

This solution deserves its own article, but the main idea is to have a "contract" between the API and the client so that you can test your code against it.

The most widely used library for this nowadays is Pact (pact.io).

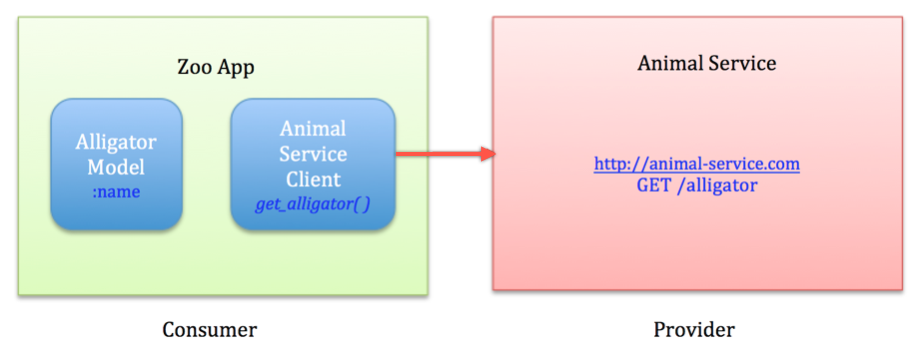

The principle is that instead of only stubbing on your side as the API client, there's a file (the pact) that describes the dependency, so you can have tests for both the client (consumer) and the server (provider). This is especially useful when you control both parts, as in a microservices environment inside the same project.

For external APIs, this is usually hard to implement because you can only test one side.
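To make the consumer/provider idea concrete, here's a toy sketch in plain Ruby. It is not Pact's API or pact file format: the contract is a hypothetical hash describing a single interaction, the consumer's test uses it as a stub, and the provider's test checks that a hypothetical request handler returns a response that matches it.

```ruby
require "json"

# Hypothetical contract: one interaction both sides agree on.
CONTRACT = {
  request: { method: "GET", path: "/alligator" },
  response: { status: 200, body: { "name" => "Betty" } }
}.freeze

# Consumer side: client code under test, fed by a stub built from the contract.
def fetch_alligator_name(http_get)
  JSON.parse(http_get.call("/alligator"))["name"]
end

stub_from_contract = lambda do |path|
  raise ArgumentError, "not in contract: #{path}" unless path == CONTRACT[:request][:path]

  JSON.generate(CONTRACT[:response][:body])
end
consumer_result = fetch_alligator_name(stub_from_contract)

# Provider side: a hypothetical handler that must honor the same contract.
provider_handler = lambda do |method, path|
  if method == "GET" && path == "/alligator"
    [200, JSON.generate({ name: "Betty" })]
  else
    [404, "{}"]
  end
end

status, body = provider_handler.call(CONTRACT[:request][:method], CONTRACT[:request][:path])
provider_ok = status == CONTRACT[:response][:status] &&
              JSON.parse(body) == CONTRACT[:response][:body]
```

If the provider later renamed name to full_name, provider_ok would become false while the consumer's stubbed test kept passing, which is exactly the gap contracts are meant to close.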

Let's look at a quick example, adapted from the official docs, in which we control both sides.

Imagine we have an integration between an app, called Zoo App, and an API called Animal Service.

First steps

We can start with our model from the Zoo App (consumer) perspective:

```ruby
class Alligator
  attr_reader :name

  def initialize(name)
    @name = name
  end

  def ==(other)
    other.is_a?(Alligator) && other.name == name
  end
end
```

And the Animal Service client class:

```ruby
require 'httparty'

class AnimalServiceClient
  include HTTParty
  base_uri 'http://animal-service.com'

  def get_alligator
    # Not implemented yet: since we're doing TDD, we write a failing spec first.
  end
end
```

Configure the mock Animal Service

Now we can add a mock service on localhost:1234, which responds to our application's queries over HTTP the way the Animal Service would.

It also creates a mock provider object, animal_service, which we'll use to set up our expectations.

```ruby
# In /spec/service_providers/pact_helper.rb

require 'pact/consumer/rspec'

Pact.service_consumer "Zoo App" do
  has_pact_with "Animal Service" do
    mock_service :animal_service do
      port 1234
    end
  end
end
```

Write a failing spec for the Animal Service client

```ruby
# In /spec/service_providers/animal_service_client_spec.rb

describe AnimalServiceClient, pact: true do
  before do
    # Point the client at the mock service instead of the real Animal Service
    AnimalServiceClient.base_uri 'localhost:1234'
  end

  subject { AnimalServiceClient.new }

  describe "get_alligator" do
    before do
      animal_service.given("an alligator exists").
        upon_receiving("a request for an alligator").
        with(method: :get, path: '/alligator', query: '').
        will_respond_with(
          status: 200,
          headers: { 'Content-Type' => 'application/json' },
          body: { name: 'Betty' }
        )
    end

    it "returns an alligator" do
      expect(subject.get_alligator).to eq(Alligator.new('Betty'))
    end
  end
end
```

Run the specs

Running the AnimalServiceClient spec generates a pact file in the configured pact dir (spec/pacts by default).

The above test fails because we haven't implemented the get_alligator method yet, so let's implement it.

Implement the Animal Service client consumer methods

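```ruby
require 'json'
require 'httparty'

class AnimalServiceClient
  include HTTParty
  base_uri 'http://animal-service.com'

  # Fetches /alligator and builds an Alligator from the "name" field
  def get_alligator
    name = JSON.parse(self.class.get("/alligator").body)['name']
    Alligator.new(name)
  end
end
```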

Rerun the specs.

Green! We now have a pact file that we can use to verify our expectations of the Animal Service provider project.

We can now focus on the Animal Service (provider).

Honoring the pact file we made

First, we need to require pact/tasks in our Rakefile.

```ruby
# In Rakefile

require 'pact/tasks'
```

And then create a pact_helper.rb in our service provider project.

```ruby
# In spec/service_consumers/pact_helper.rb

require 'pact/provider/rspec'

Pact.service_provider "Animal Service" do
  honours_pact_with 'Zoo App' do
    # This example points to a local file. However, on a real project with a
    # continuous integration box, we would use a Pact Broker
    # (https://github.com/pact-foundation/pact_broker) or publish our pacts as
    # artifacts, and point the pact_uri to the pact published by the last
    # successful build.
    pact_uri '../zoo-app/spec/pacts/zoo_app-animal_service.json'
  end
end
```

Let's run our failing specs:

```shell
$ rake pact:verify
```

We now have a failing spec to develop against. Once we write the code that respects this contract, we'll have the confidence that our consumer and provider will play nicely together.

Closing thoughts

We've seen different approaches to the same problem, and, as with many other things in programming, there's no silver bullet that solves everything without downsides.

After many years of developing software, I think it makes sense to have fast unit tests and end-to-end tests that can prove that everything connects, along with alerts that can let you know if something important in your system is down.